Walk onto any factory floor that has been operating for more than two decades and you'll find a history lesson in industrial automation. A Siemens S7-300 from the late nineties communicating via MPI. A Fanuc robot controller speaking its own proprietary protocol. A modern Beckhoff system on EtherCAT. An MES running on an Oracle database. A historian collecting data via OPC DA. A quality system exporting CSV files every shift.

This isn't unusual. This is normal. A mid-sized manufacturing plant typically operates with 30 to 50 different data formats and protocols, accumulated over years of incremental equipment purchases, vendor selections, and system upgrades. Each system made sense when it was installed. Together, they form a data landscape that resists any attempt at unified analysis.

Why Data Fragmentation Kills AI Before It Starts

AI models don't care about protocols. They need structured, time-aligned, semantically consistent data. But when a vibration signal comes from an OPC UA server at millisecond resolution, temperature data arrives via MQTT at five-second intervals, and quality results are logged in a relational database at batch-level granularity, the preprocessing burden is enormous. Just getting these three signals onto a common time base can take weeks of engineering.
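
To make the alignment problem concrete, here is a minimal sketch of bringing three signals with different sampling rates onto one five-second grid. The sample values, the helper names `bucket_mean` and `asof`, and the choice of a plain mean for downsampling are all assumptions made for illustration; a real pipeline would pick the aggregation per signal (RMS is a common choice for vibration).

```python
from bisect import bisect_right
from statistics import fmean

def bucket_mean(samples, grid, step):
    """Average all (t, value) samples that fall in [g, g + step) for each grid time g."""
    out = []
    for g in grid:
        window = [v for t, v in samples if g <= t < g + step]
        out.append(fmean(window) if window else None)
    return out

def asof(samples, grid):
    """Carry the latest sample at or before each grid time forward (None before the first)."""
    times = [t for t, _ in samples]  # samples must be sorted by time
    out = []
    for g in grid:
        i = bisect_right(times, g)
        out.append(samples[i - 1][1] if i > 0 else None)
    return out

# Hypothetical inputs, timestamps in seconds since the start of the shift.
vibration = [(0.000, 1.0), (0.001, 3.0), (5.000, 2.0), (5.001, 4.0)]  # millisecond data
temperature = [(0.0, 41.5), (5.0, 42.0)]                              # five-second data
quality = [(0.0, "batch-A: pass")]                                    # batch-level result

STEP = 5.0
grid = [0.0, 5.0]

# One row per grid time: (time, mean vibration, latest temperature, latest batch result)
rows = list(zip(
    grid,
    bucket_mean(vibration, grid, STEP),
    asof(temperature, grid),
    asof(quality, grid),
))
```

Even in this toy form, each signal needs its own alignment rule: fast signals are aggregated, slow signals are carried forward, and batch results are attached to every row they cover.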

The fragmentation creates compounding problems:

- Semantic inconsistency — the same physical measurement labeled differently across systems ("spindle_temp" vs. "T_SP1" vs. "temperature_main_spindle")

- Temporal misalignment — systems running on unsynchronized clocks, making event correlation unreliable

- Access complexity — each data source requires different credentials, drivers, and query methods

- Schema drift — firmware updates and configuration changes alter data structures without warning
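
Three of these problems can be sketched in a few lines each. The alias table, the clock offsets, and the expected field set below are hypothetical values chosen for illustration, and the function names are not taken from any particular product. Access complexity is left out because it depends entirely on the drivers involved.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical alias table: source-specific tag -> canonical signal name.
ALIASES = {
    "spindle_temp": "spindle.temperature",
    "T_SP1": "spindle.temperature",
    "temperature_main_spindle": "spindle.temperature",
}

# Hypothetical measured offsets (source clock minus reference clock).
CLOCK_OFFSET = {
    "plc_a": timedelta(seconds=2.5),
    "historian": timedelta(seconds=-1),
}

# Hypothetical schema every incoming record is expected to follow.
EXPECTED_FIELDS = {"tag", "value", "ts"}

def canonical_name(tag):
    """Semantic inconsistency: map a vendor-specific tag to one shared name."""
    return ALIASES[tag]  # raises KeyError for unmapped tags

def to_reference_time(source, ts):
    """Temporal misalignment: shift a source timestamp onto the reference clock."""
    return ts - CLOCK_OFFSET.get(source, timedelta(0))

def schema_drift(record):
    """Schema drift: return (missing, unexpected) field names for a record."""
    fields = set(record)
    return sorted(EXPECTED_FIELDS - fields), sorted(fields - EXPECTED_FIELDS)
```

For example, `schema_drift({"tag": "T_SP1", "val": 41.5, "ts": 0})` reports `value` as missing and `val` as unexpected, which is the kind of silent rename a firmware update can introduce.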

Every AI project that ignores these realities spends its first months fighting data infrastructure instead of training models. The technical debt accumulates silently until it becomes the dominant cost of every initiative.

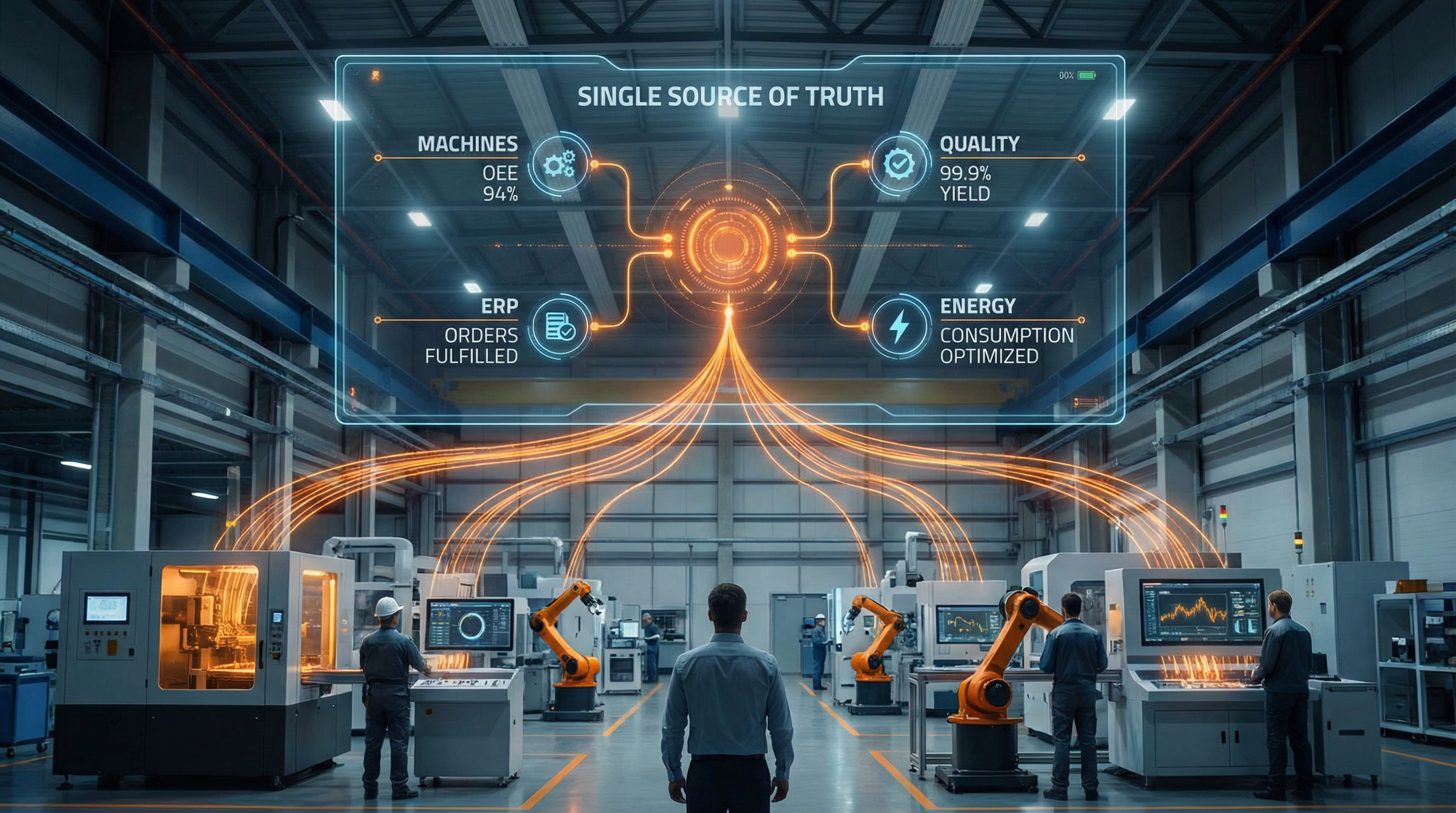

Building the Unified Truth

A unified data layer doesn't mean replacing existing systems. It means placing an abstraction layer on top that normalizes, aligns, and contextualizes data from every source into a consistent model. At RockQ, this layer ingests from OPC UA, MQTT, Modbus, S7, REST APIs, databases, and file-based sources — all through configuration, not code. Every signal receives a canonical name, a unit, a timestamp aligned to a common clock, and process context that makes it meaningful.
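
As a rough illustration of "configuration, not code", the sketch below drives normalization from a declarative mapping. It is not RockQ's actual configuration format: the `CONFIG` structure, the `Signal` record, the machine names, and the scaling values are all assumptions made for this example.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

@dataclass(frozen=True)
class Signal:
    name: str            # canonical name
    value: float         # value in the canonical unit
    unit: str
    timestamp: datetime  # UTC, on the common clock
    context: dict        # process context such as machine and line

# Hypothetical mapping: (source, raw tag) -> normalization rule.
# canonical value = raw value * scale + offset
CONFIG = {
    ("cnc_1", "T_SP1"): {
        "name": "spindle.temperature", "unit": "degC",
        "scale": 0.1, "offset": 0.0,                 # raw value is tenths of a degree
        "context": {"machine": "cnc_1", "line": "3"},
    },
    ("cnc_2", "spindle_temp_f"): {
        "name": "spindle.temperature", "unit": "degC",
        "scale": 5 / 9, "offset": -32 * 5 / 9,       # raw value is Fahrenheit
        "context": {"machine": "cnc_2", "line": "7"},
    },
}

def normalize(source, tag, raw_value, epoch_ms):
    """Turn one raw reading into a canonical Signal using only the mapping above."""
    rule = CONFIG[(source, tag)]
    return Signal(
        name=rule["name"],
        value=raw_value * rule["scale"] + rule["offset"],
        unit=rule["unit"],
        timestamp=datetime.fromtimestamp(epoch_ms / 1000, tz=timezone.utc),
        context=dict(rule["context"]),
    )
```

Connecting another machine in this sketch means adding an entry to `CONFIG`; the `normalize` function itself stays unchanged.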

The impact is immediate. When a process engineer wants to compare spindle load patterns across three CNC machines from three different manufacturers, the same query works for all of them. When an AI model needs vibration, temperature, and tool-wear data for a combined health score, all three signals are already in the same format, same resolution, same time base. The engineer focuses on the analysis, not the plumbing.

From Single Machine to Cross-Plant Intelligence

The real power of a unified data layer emerges at scale. When every machine across every line speaks the same data language, analytics that were previously impossible become straightforward. Compare OEE across plants. Benchmark energy efficiency by product type. Identify why the same part fails quality checks on Line 3 but passes on Line 7. These insights require data that is not just accessible, but comparable — and that is exactly what normalization delivers.
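
Once the inputs are comparable, a cross-line OEE comparison reduces to a few lines. The sketch below uses the standard definition, OEE = availability × performance × quality; the `ShiftData` record and the numbers for the two lines are invented for illustration.

```python
from dataclasses import dataclass

@dataclass
class ShiftData:
    planned_time_s: float      # planned production time
    run_time_s: float          # time the line actually ran
    ideal_cycle_time_s: float  # ideal time per part
    total_count: int           # parts produced
    good_count: int            # parts that passed quality checks

def oee(d):
    """Overall Equipment Effectiveness as availability * performance * quality."""
    availability = d.run_time_s / d.planned_time_s
    performance = d.ideal_cycle_time_s * d.total_count / d.run_time_s
    quality = d.good_count / d.total_count
    return availability * performance * quality

# Hypothetical shift data, already normalized to the same fields and units.
lines = {
    "Line 3": ShiftData(1000, 800, 2.0, 360, 342),
    "Line 7": ShiftData(1000, 900, 2.0, 420, 415),
}

# Rank lines from best to worst OEE.
ranking = sorted(lines, key=lambda name: oee(lines[name]), reverse=True)
```

The calculation is trivial; what makes it possible is that both lines report run time, counts, and cycle times under the same names and in the same units.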

Manufacturers who invest in a unified data layer don't just solve today's AI use case. They build the foundation for every future one. Each new machine connected, each new signal normalized, adds to an asset that grows more valuable with time. The alternative — building custom pipelines for each project — is a strategy that guarantees diminishing returns.