There is a pattern that plays out in manufacturing companies almost every time AI enters the conversation. A technology vendor gives an impressive demo. Leadership gets excited. A team is assembled with a mandate to "implement AI." They evaluate frameworks, debate model architectures, and spin up infrastructure. Six months later, there is a proof of concept that classifies something on a test dataset — but nobody can explain how it connects to a business outcome anyone cares about.

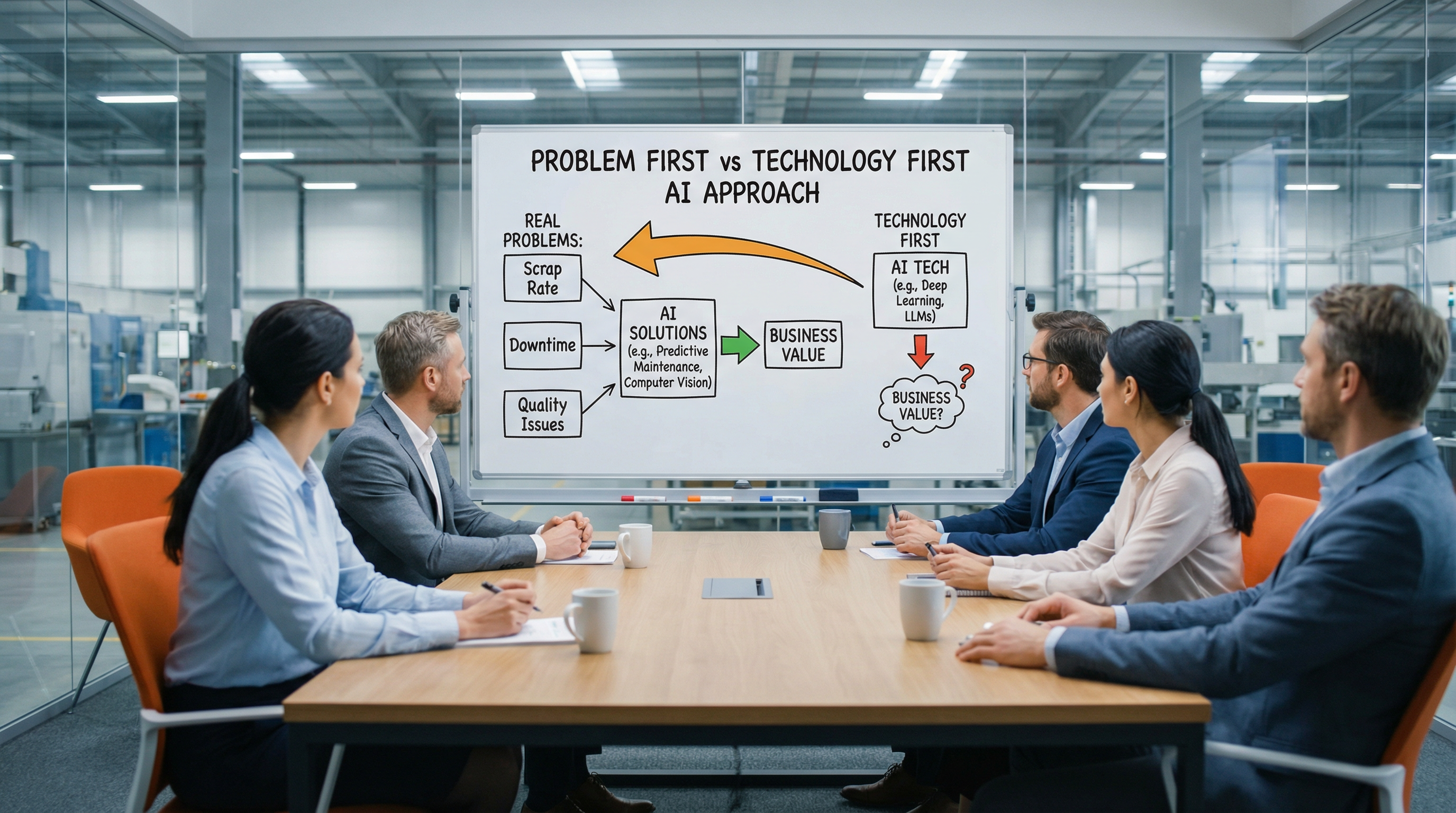

This is the technology-first trap, and it is remarkably common. The instinct to start with the tool rather than the problem feels logical — after all, you need to understand what AI can do before you apply it. But in practice, this approach leads to solutions searching for problems, and those solutions almost never survive contact with production reality.

The Technology-First Trap

Technology-first initiatives share a set of recognizable symptoms. The project starts with a general mandate like "use AI to improve quality" or "build a predictive maintenance system." The scope is broad because the problem hasn't been defined precisely. The team selects a use case that is technically interesting rather than operationally critical. And the success criteria are vague — improved accuracy on a validation set, a working dashboard, a demo that impresses the steering committee.

The result is predictable. The pilot works in a controlled setting but cannot be deployed because it doesn't integrate with existing workflows. Operators don't trust it because they weren't involved in defining what it should do. The ROI calculation is speculative because no one quantified the cost of the problem the model is supposedly solving. The project quietly stalls, and the organization concludes that AI is "not mature enough for our environment."

The real issue is not maturity. The issue is that nobody started by asking: what specific production problem costs us the most money, causes the most downtime, or creates the highest scrap rate? And can we quantify that cost precisely enough to justify an investment?

A Problem-First Framework That Works

The problem-first approach inverts the sequence entirely. Before any model is selected, before any data pipeline is discussed, the team identifies and ranks production problems by their measurable business impact. This is not a vague brainstorming session. It requires structured input from operations, quality, maintenance, and finance. The goal is a prioritized list of problems with concrete numbers attached.

A practical framework for ranking manufacturing AI use cases scores each candidate on four dimensions:

- Quantified cost of the problem. What does this issue cost per month in scrap, rework, downtime, energy waste, or delayed shipments? If you cannot attach a number, the use case is not ready.

- Data availability. Is the relevant data already being captured, or does it require new sensors, integrations, or manual collection? Use cases where data already exists move faster and prove value sooner.

- Operational feasibility. Can the AI output be integrated into an existing decision workflow? If the model produces a prediction but there is no clear action an operator can take, the use case will not deliver value regardless of accuracy.

- Scope and containment. Can the use case be scoped to a single line, machine, or product family? Smaller scope means faster iteration, clearer evaluation, and lower risk.

When you score use cases across these dimensions, the right starting point usually becomes obvious — and it is rarely the most technically ambitious option. The best first AI use case is typically unglamorous: reducing false rejects on an inspection station, predicting a specific failure mode on a critical machine, or optimizing a single process parameter that directly affects yield.
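To make the scoring step concrete, here is a minimal sketch of how the four dimensions could be combined into a ranking. Everything specific in it is an assumption for illustration: the 1-to-5 rating scale, the weights, the `UseCase` fields, and the three candidate use cases with their numbers are hypothetical, not figures from a real plant. The one rule taken directly from the framework is that a candidate without a quantified cost is treated as not ready and left out of the ranking.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class UseCase:
    name: str
    monthly_cost_eur: Optional[float]  # quantified cost of the problem; None = not quantified
    data_availability: int             # 1 (needs new sensors) .. 5 (data already captured)
    operational_feasibility: int       # 1 (no clear operator action) .. 5 (fits an existing workflow)
    containment: int                   # 1 (plant-wide) .. 5 (single line, machine, or product family)


# Hypothetical weights; a real team would agree these with operations and finance.
WEIGHTS = {"cost": 0.4, "data": 0.2, "feasibility": 0.2, "containment": 0.2}


def score(use_case: UseCase, max_cost: float) -> float:
    """Weighted score on a 0..5 scale. Cost is scaled relative to the costliest candidate."""
    cost_score = 5 * use_case.monthly_cost_eur / max_cost if max_cost > 0 else 0.0
    return (
        WEIGHTS["cost"] * cost_score
        + WEIGHTS["data"] * use_case.data_availability
        + WEIGHTS["feasibility"] * use_case.operational_feasibility
        + WEIGHTS["containment"] * use_case.containment
    )


def rank(use_cases: list[UseCase]) -> list[UseCase]:
    """Drop candidates without a quantified cost, then sort the rest by score, best first."""
    ready = [uc for uc in use_cases if uc.monthly_cost_eur is not None]
    if not ready:
        return []
    max_cost = max(uc.monthly_cost_eur for uc in ready)
    return sorted(ready, key=lambda uc: score(uc, max_cost), reverse=True)


# Hypothetical candidates, for illustration only.
candidates = [
    UseCase("Plant-wide predictive maintenance", 40_000, 2, 2, 1),
    UseCase("False rejects on inspection station 3", 12_000, 5, 5, 5),
    UseCase("Use AI to improve quality", None, 3, 2, 1),  # no number attached: not ready
]

ranked = rank(candidates)
top_cost = max(uc.monthly_cost_eur for uc in ranked)
for uc in ranked:
    print(f"{score(uc, top_cost):.2f}  {uc.name}")
```

With these made-up numbers, the narrowly scoped inspection-station case ranks above the plant-wide program even though the plant-wide problem costs more per month, because data, feasibility, and containment all count toward the score. Shifting more weight onto cost would change the order, which is why the weights deserve an explicit discussion rather than a default.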

Incremental Value Beats Transformational Ambition

The most successful manufacturing AI programs we have seen share a common trait: they start small, deliver measurable value quickly, and then expand. The first use case is chosen not because it will transform the factory, but because it will prove that the approach works and create organizational confidence. A predictive model that saves €80,000 per year on a single press line is not going to make headlines, but it builds the credibility needed to fund the next five use cases.

This incremental approach has a compounding advantage when built on the right foundation. At RockQ, the no-code platform and ML Studio mean that the infrastructure built for use case one — the connectors, the data preparation workflows, the deployment pipelines — is reusable for use case two and beyond. Each subsequent project takes less time and costs less, because the platform eliminates the repeated integration work that typically makes every use case feel like starting from scratch.

The manufacturers who succeed with AI are not the ones with the most sophisticated models or the largest data science teams. They are the ones who ask better questions at the start. Define the problem precisely. Quantify the cost. Scope the solution tightly. Deliver value, then expand. This is not a limitation of ambition — it is the only proven path to scaling AI in production environments where every decision must justify itself in operational terms.