Here's a pattern that repeats in nearly every manufacturing organization pursuing AI. The first use case — say, predictive maintenance on a critical CNC line — takes eight months. The team builds custom connectors to the PLC, writes ETL scripts for the historian, negotiates API access with the MES vendor, and hand-wires a dashboard. It works. Leadership is impressed.

Then comes use case number two: energy optimization on the same line. Different signals, but the same machines. You'd expect 80% of the infrastructure to carry over. Instead, the team discovers that the connectors were built for specific data points, the pipeline assumed a fixed schema, and the dashboard was hardcoded. They start over. Again.

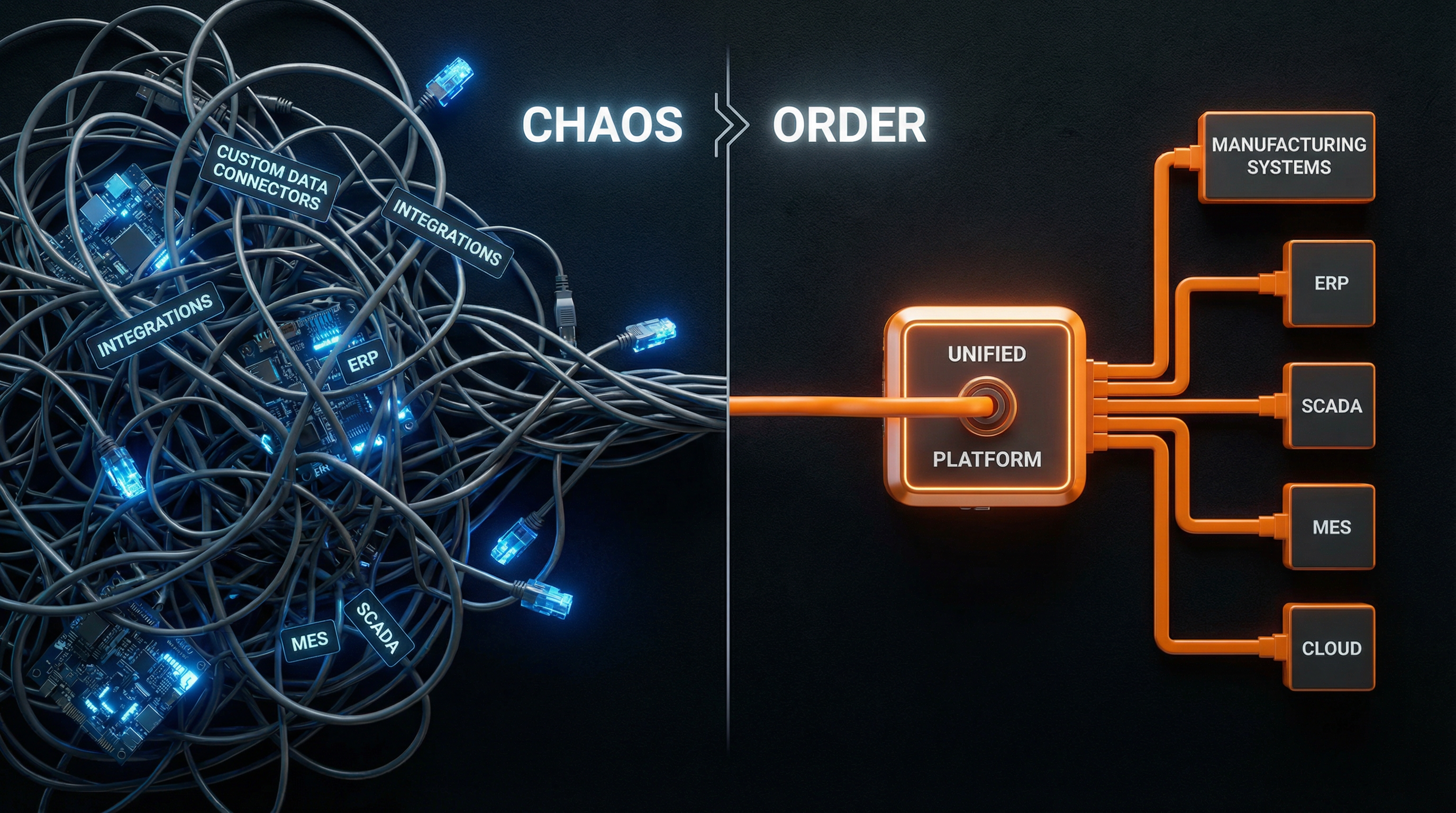

The Hidden Cost of Point-to-Point Integration

This is what we call the integration tax — the cumulative cost of rebuilding connectivity, data pipelines, and deployment logic for every new AI use case. It's not a one-time fee. It compounds. Each new project inherits the complexity of the last one without inheriting its infrastructure.

The numbers are sobering. In a typical manufacturing AI initiative, integration and data engineering consume 60–70% of the total project budget. The actual model, the part everyone thinks of as "the AI", accounts for only a small fraction of the work. Yet organizations keep funding projects as if the model were the bottleneck.

The symptoms are predictable:

- Custom connectors rebuilt from scratch for every project

- Data pipelines that break when a machine firmware update changes a register address

- Deployment scripts tied to a specific server configuration

- Dashboards that can't be reused because they're hardcoded to one use case

- IT teams stretched thin maintaining a growing zoo of bespoke integrations
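The second symptom, pipelines that break when a register address moves, usually traces back to device addresses being hardcoded inside pipeline code. One way to avoid that is to keep addresses in a single mapping and have pipelines ask for signals by logical name. The sketch below is illustrative only: `SignalSpec`, `read_signal`, the signal names, the register numbers, and the scale factors are all hypothetical, and `read_register` stands in for whatever device read function a real connector would provide.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Tuple


@dataclass(frozen=True)
class SignalSpec:
    """Where a logical signal lives on a device (all values hypothetical)."""
    register: int   # device-specific address; may change with firmware
    scale: float    # multiplier from raw counts to engineering units
    unit: str


# Firmware v1 layout: the only place register addresses appear.
SIGNAL_MAP_V1: Dict[str, SignalSpec] = {
    "spindle_temp": SignalSpec(register=40001, scale=0.5, unit="degC"),
    "spindle_current": SignalSpec(register=40005, scale=0.25, unit="A"),
}

# After a hypothetical firmware update moves spindle_temp, only the map changes.
SIGNAL_MAP_V2: Dict[str, SignalSpec] = {
    **SIGNAL_MAP_V1,
    "spindle_temp": SignalSpec(register=40010, scale=0.5, unit="degC"),
}


def read_signal(
    name: str,
    read_register: Callable[[int], int],
    signal_map: Dict[str, SignalSpec],
) -> Tuple[float, str]:
    """Resolve a logical signal name to a scaled value and its unit."""
    spec = signal_map[name]
    return read_register(spec.register) * spec.scale, spec.unit


if __name__ == "__main__":
    # Stand-in for a real device read: a dict of raw register values.
    raw_v2 = {40010: 130, 40005: 48}
    print(read_signal("spindle_temp", raw_v2.__getitem__, SIGNAL_MAP_V2))
```

Pipeline code that calls `read_signal("spindle_temp", ...)` stays the same across both firmware layouts; the change is confined to the mapping.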

Why Point Solutions Make It Worse

Many manufacturers try to solve this by purchasing specialized point solutions — one vendor for vibration monitoring, another for vision-based quality, a third for energy analytics. Each comes with its own connectors, its own data model, its own dashboard. The integration tax doesn't decrease. It multiplies across vendors.

What's worse, these systems rarely talk to each other. The vibration data from the predictive maintenance tool can't inform the quality model. The energy consumption patterns can't be correlated with throughput metrics. Every insight stays locked in its own silo, and the cross-functional intelligence that actually drives operational improvement remains out of reach.

The Platform Alternative: Build Once, Deploy Many

The integration tax shrinks toward zero when connectivity is treated as shared infrastructure rather than a per-project expense. At RockQ, every machine connection, data transformation, and deployment pipeline is built once and reused across all use cases. A single connector to a Siemens S7-1500 PLC serves predictive maintenance, quality analytics, and energy monitoring at the same time. A normalized data layer means new models can access any signal from any machine without rebuilding the plumbing.
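To make the "build once, deploy many" idea concrete, here is a minimal sketch of a shared signal layer in Python. It is not RockQ's implementation, which the article does not describe; `Reading`, `SignalBus`, the machine ID, and the signal names are assumptions made up for illustration. The point it shows is that one connector publishes each normalized reading once, and any number of use cases consume it without touching the connector.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable, DefaultDict, List


@dataclass(frozen=True)
class Reading:
    """One normalized data point, independent of the source protocol."""
    machine: str
    signal: str
    value: float
    unit: str


class SignalBus:
    """Hypothetical shared layer: connectors publish, use cases subscribe."""

    def __init__(self) -> None:
        self._handlers: DefaultDict[str, List[Callable[[Reading], None]]] = defaultdict(list)

    def subscribe(self, signal: str, handler: Callable[[Reading], None]) -> None:
        self._handlers[signal].append(handler)

    def publish(self, reading: Reading) -> None:
        # .get avoids creating empty entries for signals nobody listens to.
        for handler in self._handlers.get(reading.signal, ()):
            handler(reading)


bus = SignalBus()
maintenance_inputs: List[Reading] = []
energy_inputs: List[Reading] = []

# Two use cases register interest; neither knows how the PLC is reached.
bus.subscribe("spindle_current", maintenance_inputs.append)
bus.subscribe("spindle_current", energy_inputs.append)
bus.subscribe("vibration_rms", maintenance_inputs.append)

# A single connector (simulated here) publishes each reading once.
bus.publish(Reading("cnc-01", "spindle_current", 12.0, "A"))
bus.publish(Reading("cnc-01", "vibration_rms", 0.8, "mm/s"))
```

In this sketch, adding a third use case such as quality analytics is one more `subscribe` call; the connector side does not change.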

This is what turns AI from an experiment into an operational capability. The first use case might take four weeks. The second takes two. By the fifth, teams are deploying in days — because the foundation already exists. The integration tax drops to near zero, and the marginal cost of each new use case becomes the cost of the AI logic itself, not the infrastructure surrounding it.

Manufacturers who treat integration as a strategic investment rather than a project cost don't just move faster. They build a compounding advantage: every use case makes the next one easier, cheaper, and more valuable.