Every manufacturing AI conversation eventually arrives at the same place: data. Not algorithms, not compute power, not talent — data. Specifically, the quality of the data that feeds every model, every prediction, every automated decision on the shop floor. And in most factories, that data is in far worse shape than anyone wants to admit.

The uncomfortable truth is that manufacturing generates enormous volumes of data every second, yet very little of it is usable for machine learning without significant cleanup. Sensors capture raw signals. PLCs log states in proprietary formats. MES platforms record events with their own timestamps and conventions. The result is a sprawling landscape of information that looks complete on the surface but falls apart the moment you try to train a model on it.

The Four Silent Killers of Manufacturing Data

Data quality problems in manufacturing are not always obvious. They don't trigger alarms. They don't halt production. They silently degrade model performance until a team concludes that "AI doesn't work for our process." In reality, the process wasn't the problem — the data was. Here are the four most common issues we see across factories.

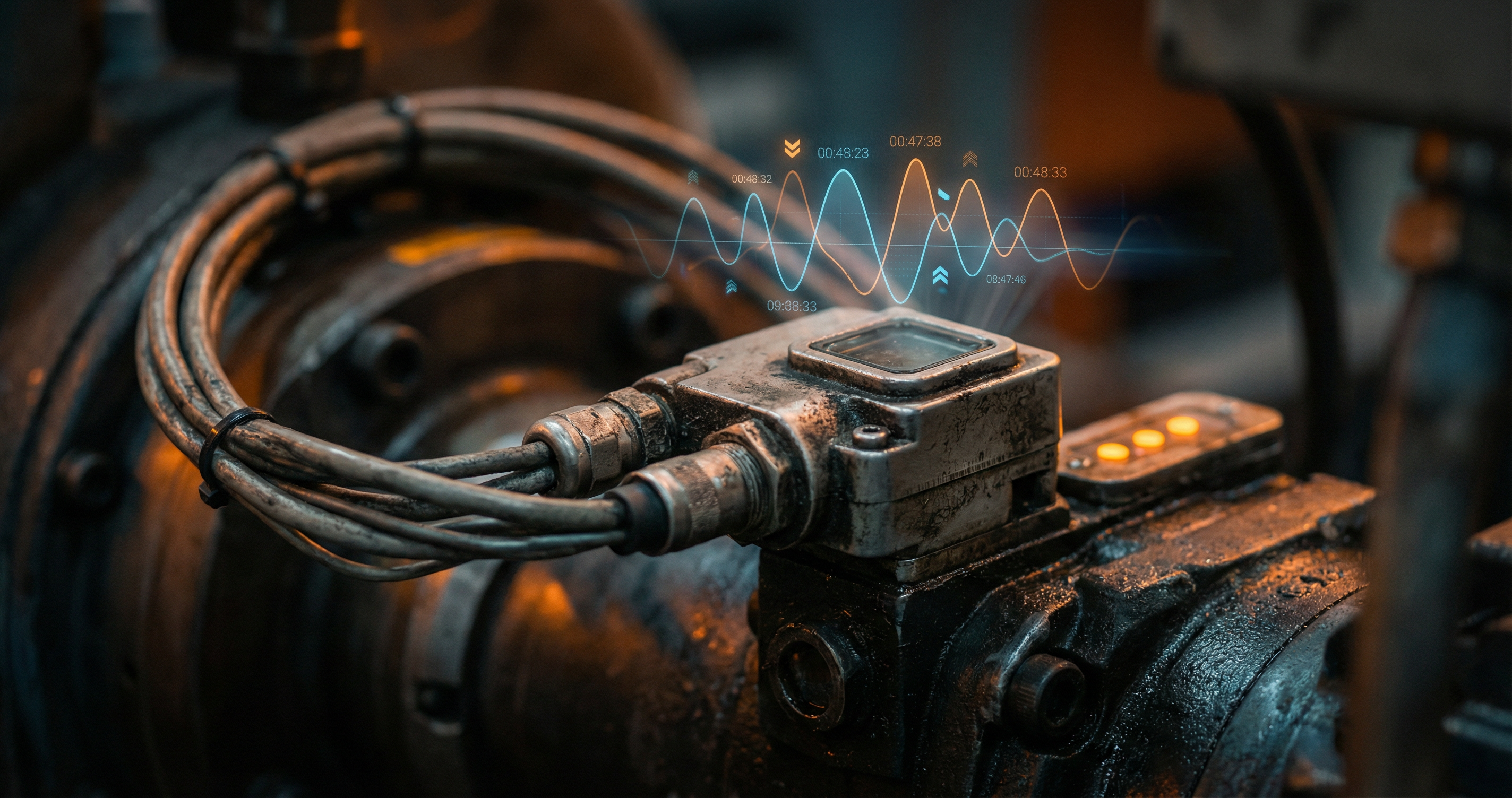

Sensor drift is perhaps the most insidious. A temperature sensor that was calibrated six months ago may now read 2°C too high. A vibration sensor mounted on a motor housing gradually shifts its baseline as the mounting loosens imperceptibly. These drifts are small enough that operators never notice, but large enough that a machine learning model trained on historical data will produce incorrect predictions. Without systematic recalibration tracking, sensor drift poisons your dataset quietly over time.
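To make the idea concrete, here is a minimal sketch of one way to check for drift: compare the median of a recent window against a baseline window captured right after calibration, both taken at the same setpoint. The function names, the readings, and the 0.5°C tolerance are illustrative assumptions, not values from any particular plant or product.

```python
from statistics import median

def estimate_drift(baseline, recent):
    """Shift in median between a post-calibration window and a recent window."""
    return median(recent) - median(baseline)

def is_drifting(baseline, recent, tolerance):
    """True if the median shift exceeds the allowed tolerance in either direction."""
    return abs(estimate_drift(baseline, recent)) > tolerance

# Hypothetical temperature readings (deg C), all taken at the same setpoint.
baseline = [180.1, 179.9, 180.0, 180.2, 179.8]  # right after calibration
recent = [182.0, 182.1, 181.9, 182.2, 181.8]    # six months later

offset = estimate_drift(baseline, recent)
drifting = is_drifting(baseline, recent, tolerance=0.5)
print(f"offset={offset:.1f} C, drifting={drifting}")
```

The median is used instead of the mean so that a single transient spike does not look like drift. The comparison only makes sense when both windows come from the same operating conditions; otherwise a real process change would be mistaken for a sensor problem.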

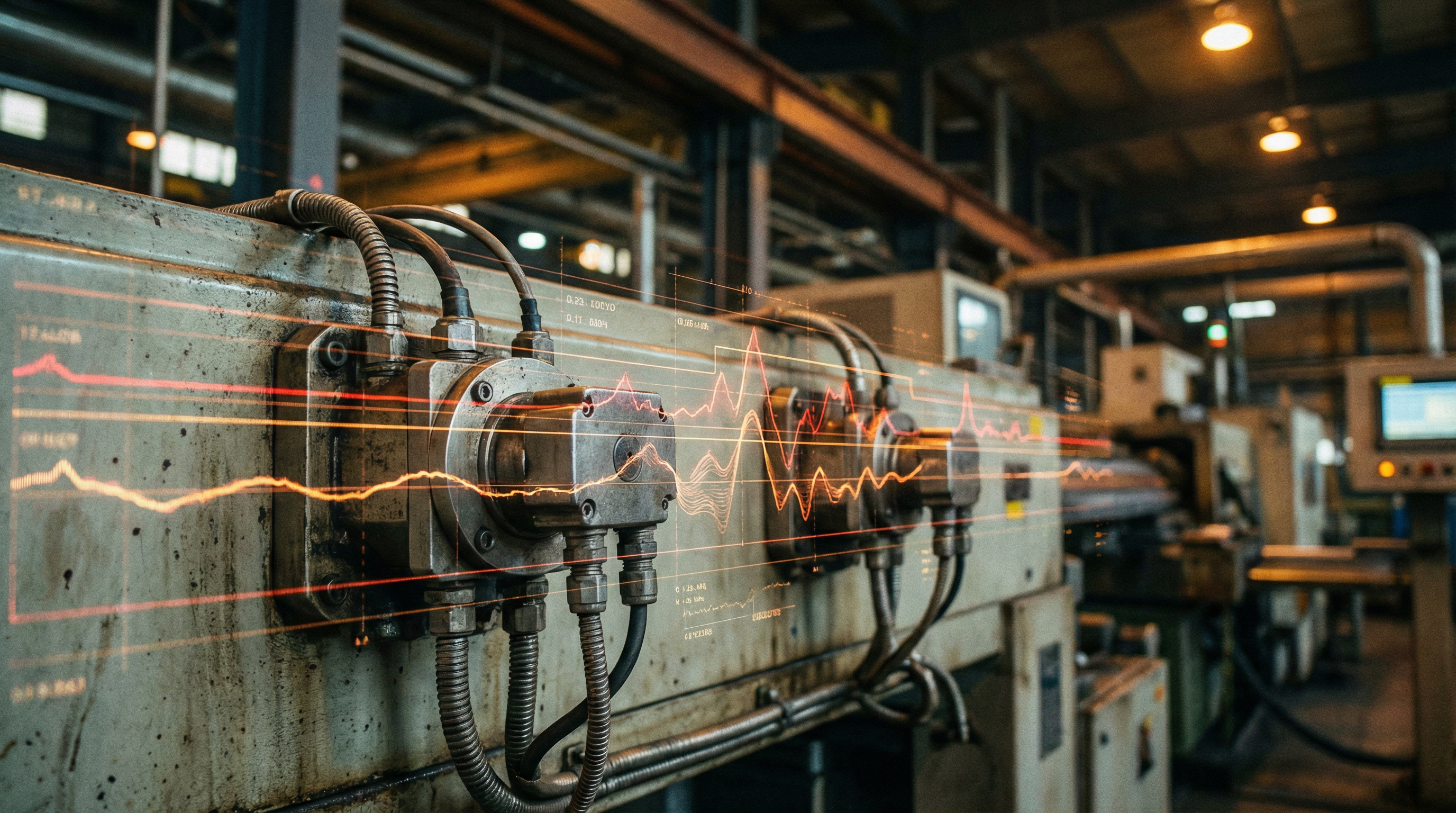

Timestamp misalignment is the second killer. A PLC records a spindle speed change at 10:03:22. The MES logs a quality event at 10:03:25. The test station records a measurement at 10:03:28. Are these three events related? It depends entirely on whether the clocks are synchronized — and in most factories, they are not. PLCs, SCADA systems, MES platforms, and edge devices each maintain their own clocks, sometimes drifting by seconds, occasionally by minutes. When you try to correlate cause and effect across systems, misaligned timestamps make it nearly impossible to build reliable training data.
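As a rough sketch of what alignment involves, the snippet below shifts an MES timestamp onto a reference clock using a measured offset, then looks for the nearest PLC event within a tolerance. The 3-second offset, the 1-second tolerance, the date, and the helper names are assumptions made for illustration; in practice the offset has to be measured for each system.

```python
from bisect import bisect_left
from datetime import datetime, timedelta

def to_reference(ts, clock_offset_s):
    """Shift a local timestamp onto the reference clock.

    clock_offset_s is how far the local clock runs ahead of the reference.
    """
    return ts - timedelta(seconds=clock_offset_s)

def nearest_within(sorted_times, target, tolerance_s):
    """Index of the time closest to target, or None if none is within tolerance."""
    i = bisect_left(sorted_times, target)
    candidates = [j for j in (i - 1, i) if 0 <= j < len(sorted_times)]
    if not candidates:
        return None
    best = min(candidates,
               key=lambda j: abs((sorted_times[j] - target).total_seconds()))
    gap = abs((sorted_times[best] - target).total_seconds())
    return best if gap <= tolerance_s else None

# Hypothetical PLC events, assumed to already be on the reference clock.
plc_times = [
    datetime(2024, 1, 15, 10, 3, 10),
    datetime(2024, 1, 15, 10, 3, 22),  # spindle speed change
    datetime(2024, 1, 15, 10, 3, 40),
]
mes_logged = datetime(2024, 1, 15, 10, 3, 25)  # quality event, MES clock

raw_match = nearest_within(plc_times, mes_logged, tolerance_s=1.0)
mes_corrected = to_reference(mes_logged, clock_offset_s=3.0)
corrected_match = nearest_within(plc_times, mes_corrected, tolerance_s=1.0)
print(raw_match, corrected_match)  # prints: None 1
```

Without the offset correction the quality event matches nothing; with it, the event lines up with the spindle speed change. The tolerance is a deliberate choice: too tight and real relationships are missed, too loose and unrelated events get joined.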

Missing labels present the third challenge. Supervised machine learning requires labeled data: you need to know which production runs were good and which had defects, which machine states led to failures and which were normal. But in manufacturing, failures are rare events. Quality outcomes are often recorded hours or days after production. Root causes are ambiguous, documented inconsistently, or not documented at all. Building a labeled dataset for a process with a 0.3% defect rate means you need thousands of production cycles just to get a statistically meaningful number of failure examples; at that rate, 10,000 cycles yield only about 30 defective parts on average.
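The arithmetic behind that statement is simple enough to sketch. If defects occur at rate p, the expected number of defects in n cycles is n × p, so collecting k failure examples takes roughly k / p cycles. The targets of 30 and 100 examples below are illustrative assumptions, not recommendations for any specific model.

```python
from fractions import Fraction
from math import ceil

def cycles_needed(target_defects, defect_rate):
    """Cycles required so that the expected defect count reaches target_defects.

    Uses exact fractions to avoid floating-point rounding at the boundary.
    """
    rate = Fraction(str(defect_rate))
    return ceil(target_defects / rate)

rate = 0.003  # 0.3% defect rate, as in the text
print(cycles_needed(30, rate))   # prints: 10000
print(cycles_needed(100, rate))  # prints: 33334
```

These are expected values, not guarantees: any given batch of 10,000 cycles may contain noticeably more or fewer than 30 defects, and not every defect will come with a usable label.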

Context gaps are the fourth and most overlooked issue. A vibration reading of 4.2 mm/s means something entirely different during machine startup than during steady-state production. The same current draw on a motor tells a different story when the machine is running a roughing operation versus a finishing pass. Without operational context — the recipe, the tool condition, the material batch, the production phase — raw sensor data is ambiguous. Models trained without this context learn patterns that don't generalize, because they're fitting to noise rather than process physics.
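One way to see the effect of context is to score the same reading against a separate baseline for each production phase. The sketch below does this with a simple z-score; the phase names and vibration values are made up for illustration, and a real process would need far more history per phase.

```python
from collections import defaultdict
from statistics import mean, stdev

def phase_baselines(readings):
    """Build {phase: (mean, stdev)} from an iterable of (phase, value) pairs."""
    by_phase = defaultdict(list)
    for phase, value in readings:
        by_phase[phase].append(value)
    return {p: (mean(v), stdev(v)) for p, v in by_phase.items()}

def z_score(value, phase, baselines):
    """How many standard deviations a value sits from its phase's mean."""
    mu, sigma = baselines[phase]
    return (value - mu) / sigma

# Hypothetical vibration history in mm/s, tagged with the production phase.
history = [
    ("startup", 4.0), ("startup", 4.4), ("startup", 4.1), ("startup", 4.3),
    ("steady", 1.9), ("steady", 2.1), ("steady", 2.0), ("steady", 2.2),
]
baselines = phase_baselines(history)

z_startup = z_score(4.2, "startup", baselines)
z_steady = z_score(4.2, "steady", baselines)
print(f"startup z={z_startup:.1f}, steady z={z_steady:.1f}")
```

With these numbers, 4.2 mm/s is unremarkable during startup and far outside the normal range during steady-state production. A model that never sees the phase tag has no way to tell the two cases apart.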

Why Traditional Approaches Fall Short

The standard response to data quality problems is to hire a data engineering team, build ETL pipelines, and clean the data centrally. This approach has three fundamental flaws in a manufacturing environment:

- Data engineers don't understand the process. They can normalize formats and remove obvious outliers, but they cannot determine whether a temperature spike at timestamp X is sensor drift, a legitimate process change, or a startup transient. Only someone who understands the machine can make that judgment.

- Centralized cleaning is too slow. By the time a data pipeline is built, validated, and deployed for one use case, the process has already changed — new tooling, different material batches, updated recipes. The pipeline is always chasing a moving target.

- It doesn't scale. Every new AI use case requires its own data preparation workflow. The second use case takes almost as long as the first, because the underlying data problems are addressed downstream instead of at the source.

Fixing Data at the Source With No-Code Tools

The breakthrough happens when you put data preparation tools directly into the hands of the people who understand the process: the process engineers, the quality managers, the machine operators. These are the people who know that sensor 7 on line 3 has been drifting since the last maintenance window. They know that the first 45 seconds after a tool change always produce abnormal vibration readings. They know which material batches behave differently.
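Expressed as code, a piece of shop-floor knowledge like the tool-change rule is often only a few lines; the hard part is knowing the rule exists. The sketch below is an illustration of that rule, not a description of how any particular tool implements it. The sample layout, the function name, and the data are assumptions.

```python
def drop_post_tool_change(samples, tool_change_times, settle_s=45.0):
    """Remove samples recorded within settle_s seconds after any tool change.

    samples: list of (t_seconds, value) pairs
    tool_change_times: list of tool change times in seconds
    """
    def in_settle_window(t):
        return any(tc <= t < tc + settle_s for tc in tool_change_times)

    return [(t, v) for t, v in samples if not in_settle_window(t)]

# Hypothetical vibration samples (seconds, mm/s) with a tool change at t=100.
samples = [(0, 2.0), (100, 2.1), (130, 6.5), (144, 5.9), (145, 2.2), (200, 2.0)]
clean = drop_post_tool_change(samples, tool_change_times=[100])
print(clean)  # prints: [(0, 2.0), (145, 2.2), (200, 2.0)]
```

The 45-second window comes from the example in the text; the right value for a given machine is exactly the kind of detail a process engineer knows and a central data team usually does not.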

This is exactly why we built the RockQ ML Studio as a visual, no-code environment. Process engineers can inspect signal data graphically, identify and remove drift periods, align timestamps across systems, tag operational context, and label quality outcomes — all without writing a single line of code. The data gets fixed where the knowledge lives, not in a disconnected data engineering silo three departments away.

The impact shows up directly in model development. When domain experts prepare the data, models train faster, generalize better, and need far fewer iterations to reach production-ready accuracy. More importantly, the data preparation workflow becomes reusable. The same cleaning logic that prepares data for a predictive maintenance model can be adapted for a quality prediction model on the same line, because the underlying data issues are resolved at the source rather than patched in a one-off pipeline.

Manufacturing data will never be perfect. Sensors will drift, clocks will misalign, labels will be incomplete. The question is not whether these problems exist — they always will. The question is whether your organization can identify and address them fast enough, with the right people, using tools that match their expertise. That is the real foundation of manufacturing AI.